February 10, 2026

The recent announcement that Anthropic will become the “official thinking partner” for Williams Racing, supported by Atlassian, marks a departure from the standard tech-sponsorship playbook. While branding is part of the deal, the focus on engineers using Claude for trackside operations suggests a pivot in how AI companies want to be perceived.

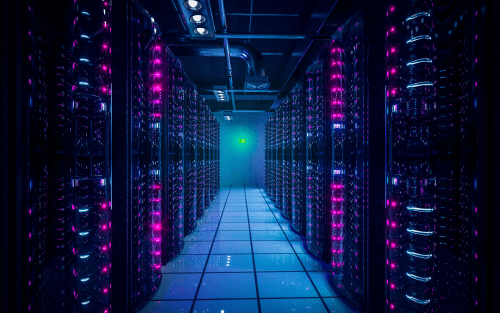

For the data center industry and the AI sector, this isn’t just another sports marketing play. It is a real-world stress test for latency, edge computing, and the physical constraints of modern infrastructure.

From Generative Hype to Functional Utility

For the last two years, the AI narrative has been dominated by “magic”—the ability to generate text or images from thin air. However, the Anthropic/Williams partnership shifts the focus to operational utility. In an F1 environment, “good enough” data isn’t useful. Decisions on tire degradation, fuel loads, and aero-elasticity happen in seconds.

By positioning Claude as a “thinking partner” for engineers, Anthropic is signaling that its models are ready for high-consequence, low-latency environments. This repositions AI from a back-office productivity tool to a core component of the industrial “stack.” For data center providers, this means the demand profile is changing. Clients are no longer just looking for bulk compute to train models; they need highly responsive, high-availability environments that can support real-time engineering feedback loops.

The Shift to “Actionable” Inference

Most of the data center growth in the past 24 months has been driven by massive training clusters—thousands of GPUs sitting in hyperscale facilities crunching historical data. But F1 is an inference-heavy sport.

“We are seeing a strategic pivot from the ‘Training Era’ to the ‘Deployment/Inference Era.’ As companies like Anthropic move into high-velocity sectors like F1, the infrastructure burden shifts from massive centralized hubs to distributed, high-density nodes.”

As AI moves into live sports and manufacturing, the “lag” of a centralized data center becomes a competitive liability. You cannot run a real-time race strategy for a car in Monaco from a server farm in Iowa.

We are seeing the early stages of a move toward Edge AI clusters. To support a team like Williams, the compute doesn’t just need to be powerful; it needs to be physically or logically closer to the data source. This validates the trend toward regional data center hubs that can offer sub-10ms latency for critical AI-driven decisions.
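A rough propagation-delay estimate shows why geography matters here. The sketch below assumes a great-circle path between Des Moines, Iowa, and Monaco (coordinates approximate) and a signal speed in optical fiber of about 200,000 km/s, roughly two-thirds of the speed of light in a vacuum. Real fiber routes are longer and add routing, queuing, and processing time, so these figures are lower bounds, not measurements.

```python
import math

# Approximate (lat, lon) coordinates; illustrative assumption, not from the source.
DES_MOINES = (41.59, -93.62)
MONACO = (43.74, 7.42)

EARTH_RADIUS_KM = 6371.0
# Light in optical fiber travels at roughly c / 1.47, about 200,000 km/s (assumption).
FIBER_SPEED_KM_PER_S = 200_000.0


def great_circle_km(a, b):
    """Haversine distance between two (lat, lon) points, in kilometers."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat = lat2 - lat1
    dlon = lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def min_round_trip_ms(distance_km, speed_km_per_s=FIBER_SPEED_KM_PER_S):
    """Lower bound on round-trip time: propagation only, no routing or compute."""
    return 2 * distance_km / speed_km_per_s * 1000


def max_distance_for_rtt_km(rtt_ms, speed_km_per_s=FIBER_SPEED_KM_PER_S):
    """Largest one-way distance whose propagation delay alone fits an RTT budget."""
    return speed_km_per_s * (rtt_ms / 1000) / 2


distance = great_circle_km(DES_MOINES, MONACO)
print(f"Great-circle distance: {distance:,.0f} km")
print(f"Minimum round trip: {min_round_trip_ms(distance):.0f} ms")
print(f"Max distance for a 10 ms round trip: {max_distance_for_rtt_km(10):,.0f} km")
```

Under these assumptions the distance comes out at roughly 7,700 km and the propagation-only round trip at roughly 77 ms, several times a 10 ms budget. Conversely, a 10 ms round trip caps the one-way distance at about 1,000 km before any compute time is spent, which is the arithmetic behind regional hubs.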

Impact on Data Center Design: Density and Thermal Management

F1 is an exercise in extreme cooling and power density—the exact challenges currently facing the data center industry. The partnership highlights a looming physical reality: as AI becomes more integrated into real-time operations, the hardware required to run these models is getting hotter and more power-hungry.

- Rack Density: We are moving away from the standard 5–10 kW racks. AI-specific deployments, particularly those used for complex simulations, are pushing toward 50 kW or even 100 kW per rack.

- Liquid Cooling: Just as an F1 car relies on sophisticated thermal management, the data centers supporting these “thinking partners” are transitioning to liquid-to-chip cooling to maintain performance.

The numbers back this up. Global committed data center capacity has grown 7x since 2019 and continues to grow strongly, with high-density AI layouts dominating investment commitments. The “F1-ification” of AI will only accelerate demand for “AI-ready” facilities that can handle the power draws required for real-time model interaction.
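The move to liquid cooling follows from a basic sensible-heat balance: the coolant flow needed to carry away a rack's heat is P / (ρ · c_p · ΔT). The sketch below assumes that essentially all rack power ends up as heat, an air temperature rise of 12 K across the rack, a water temperature rise of 10 K, and textbook property values for air and water; none of these figures come from Williams, Anthropic, or any specific facility.

```python
# Sensible-heat balance: P = density * cp * volumetric_flow * delta_T,
# so volumetric_flow = P / (density * cp * delta_T).
# All property values and temperature rises below are illustrative assumptions.

AIR_DENSITY_KG_M3 = 1.2       # dry air near sea level, ~20 C
AIR_CP_J_KG_K = 1005.0
WATER_DENSITY_KG_M3 = 997.0   # ~25 C
WATER_CP_J_KG_K = 4186.0
M3_S_TO_CFM = 2118.88         # 1 m^3/s is about 2,118.88 cubic feet per minute


def coolant_flow_m3_s(power_w, density, cp, delta_t_k):
    """Volumetric flow needed to remove power_w watts with a delta_t_k temperature rise."""
    return power_w / (density * cp * delta_t_k)


def air_flow_cfm(power_w, delta_t_k=12.0):
    """Airflow in CFM for a given rack heat load (assumed 12 K air temperature rise)."""
    return coolant_flow_m3_s(power_w, AIR_DENSITY_KG_M3, AIR_CP_J_KG_K, delta_t_k) * M3_S_TO_CFM


def water_flow_l_s(power_w, delta_t_k=10.0):
    """Water flow in liters per second (assumed 10 K water temperature rise)."""
    return coolant_flow_m3_s(power_w, WATER_DENSITY_KG_M3, WATER_CP_J_KG_K, delta_t_k) * 1000


for rack_kw in (10, 50, 100):
    watts = rack_kw * 1000
    print(f"{rack_kw:>3} kW rack: ~{air_flow_cfm(watts):,.0f} CFM of air "
          f"or ~{water_flow_l_s(watts):.2f} L/s of water")
```

Because flow scales linearly with power, a 100 kW rack needs ten times the airflow of a 10 kW rack (on the order of 14,000 to 15,000 CFM under these assumptions), while the same heat can be moved by roughly 2.4 L/s of water. That gap in volumetric heat capacity is why liquid-to-chip cooling becomes attractive at high densities.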

The Compute Arms Race: Data Centers as the New Engines

Anthropic joining Google (McLaren), Oracle (Red Bull), and IBM (Ferrari) on the F1 grid signals that compute now rivals oil as the sport’s primary fuel. This creates a new “credibility” metric for data center providers.

If Oracle’s cloud helps Max Verstappen win a World Championship, it proves the reliability of its data centers to every enterprise on earth. If Claude helps Williams, a team currently in a rebuilding phase, climb the grid through superior data synthesis, it proves that AI-driven “thinking” is the ultimate competitive advantage. Williams, for its part, isn’t just betting on an algorithm; it is betting on the uptime and reliability of the infrastructure behind it.

For data center operators, the narrative is no longer about being a “utility.” It’s about being a performance partner. If the AI goes down during a qualifying session, the car stays in the garage. This puts a premium on redundancy and power resilience that exceeds previous industry standards.
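The value of redundancy can be made concrete with the standard formula for parallel components: if each of n independent power or network paths is available a fraction a of the time and any one of them is enough, combined availability is 1 - (1 - a)^n. The sketch below assumes a hypothetical per-path availability of 99.9% and fully independent failures; real failures are often correlated (a shared utility feed, a common software bug), so treat the results as an optimistic bound.

```python
HOURS_PER_YEAR = 24 * 365


def parallel_availability(path_availability, n_paths):
    """Availability when any one of n independent, identical paths keeps the service up."""
    return 1 - (1 - path_availability) ** n_paths


def annual_downtime_minutes(availability):
    """Expected minutes of downtime per (non-leap) year."""
    return (1 - availability) * HOURS_PER_YEAR * 60


# Hypothetical per-path availability of 99.9% ("three nines").
for n in (1, 2, 3):
    a = parallel_availability(0.999, n)
    print(f"{n} path(s): availability {a:.9f}, ~{annual_downtime_minutes(a):.3f} min/year down")
```

One path at 99.9% leaves roughly 8.8 hours of expected downtime a year; a second independent path cuts that to well under a minute. The gain depends entirely on the paths failing independently, which is why resilient designs separate feeds, switchgear, and network routes rather than simply duplicating equipment.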

Efficiency and the Rise of Small Language Models (SLMs)

There is a common misconception that more AI always means more of everything: more power, more space, more water. However, the constraints of a racing team, which must travel with its equipment and operate within strict cost caps, suggest a move toward efficiency-first AI.

Anthropic’s challenge at Williams will likely involve optimizing Claude to run on limited local compute resources. This mirrors a broader trend affecting the data center industry: the rise of Small Language Models (SLMs). These are distilled, specialized models that aim to deliver most of a larger model’s utility on narrow tasks at a fraction of the compute cost.

For the data center market, this could lead to a two-tier system:

- The Titans: Massive facilities for training the next generation of Claude.

- The Sprinters: Highly efficient, modular data centers designed specifically to run SLMs for local, real-time applications.
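Much of the case for smaller models on limited local hardware comes down to memory. A rough rule is that model weights alone need parameters × bits per parameter ÷ 8 bytes. The parameter counts below are hypothetical (Anthropic does not publish Claude's sizes), and 4-bit quantization is shown as one common way to shrink weights, not as something the source says Williams or Anthropic uses. The estimate ignores the KV cache, activations, and runtime overhead, all of which add to the real footprint.

```python
GIB = 1024 ** 3


def weight_memory_gib(num_params, bits_per_param):
    """Memory for model weights only, in GiB (excludes KV cache, activations, overhead)."""
    return num_params * bits_per_param / 8 / GIB


# Hypothetical model sizes for illustration only.
MODEL_SIZES = {"small (3B)": 3e9, "mid (8B)": 8e9, "large (70B)": 70e9}

for name, params in MODEL_SIZES.items():
    fp16 = weight_memory_gib(params, 16)  # 16-bit weights
    int4 = weight_memory_gib(params, 4)   # 4-bit quantized weights (assumed technique)
    print(f"{name:>12}: ~{fp16:6.1f} GiB at 16-bit, ~{int4:6.1f} GiB at 4-bit")
```

Under these assumptions a 70B-parameter model needs about 130 GiB for 16-bit weights, more than fits on a single 80 GB GPU, while a 3B model at 4 bits needs under 2 GiB. That gap is what makes the "Sprinter" tier of compact, local deployments plausible.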

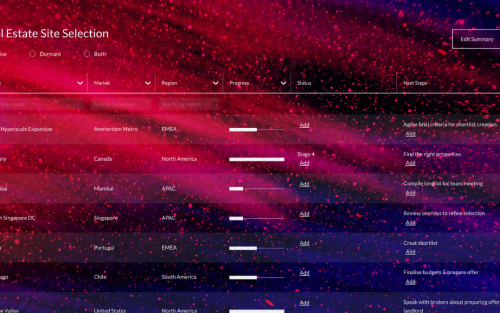

If your planning depends on understanding how real-time AI deployments are affecting delivery certainty and infrastructure timelines, book a demo with our team to explore our Market Analytics, where we capture global data center capacity by market and development stage.